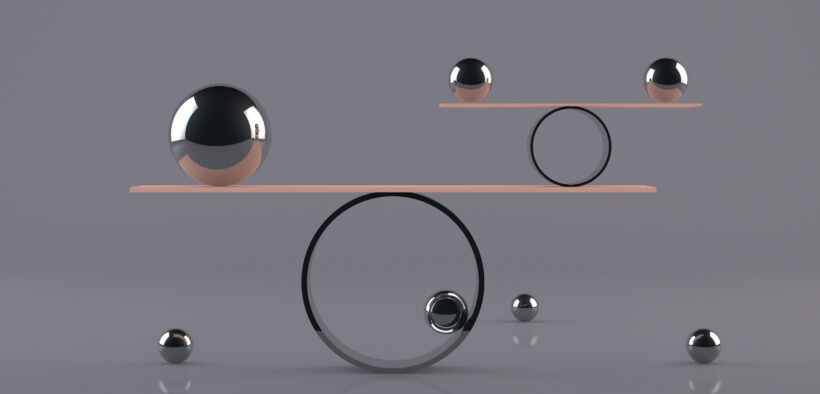

Because so much depends upon the evaluation of a student’s learning and the resulting grade, it is in everyone’s interest to try to make the evaluation system as free from irrelevant errors as possible. Borrowing from the evaluation literature, I propose the four R’s of evaluation—Relevant, Reliable, Recognizable, Realistic—as ways to ensure the quality of our evaluation systems.

Four R’s of Effective Evaluation

- Tags: exams, student learning assessment
