During a conversation about evidence-based teaching, a faculty member piped up with some enthusiasm and just a bit of pride, “I’m using an evidence-based strategy.” He described a rather unusual testing structure and concluded, “There’s a study that found it significantly raised exam scores.” He shared the reference with me afterward, and it’s a solid study: not exceptional, but good.

Related Articles

I have two loves: teaching and learning. Although I love them for different reasons, I’ve been passionate about...

Imitation may be the sincerest form of flattery, but what if it’s also the best first step to...

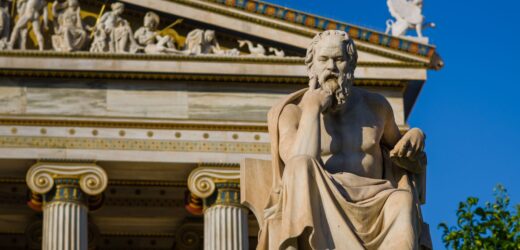

Higher education has long recognized the value of Socratic dialogue in learning. Law schools traditionally adopt it in...

After 35 years in higher education, I continue to embrace the summer as a prime opportunity to strengthen...

Last month I wrote about how students fool themselves into thinking they have learned concepts when they really...

If you’ve ever hesitated to offer feedback to a colleague for fear of creating tension or hurting a...

When I first began teaching online, I thought creating engaging and relevant content was the biggest challenge. And...